Closing the Judgment Gap

Framework | March 2026 | Week 2 | EQ in Action Series: Establish Judgment Boundaries

This is the second post in a four-part series following Maya, a composite VP of Marketing navigating the governance failures that surface when AI content workflows scale faster than judgment boundaries do. Each post stands on its own. In Week 1, a competitive comparison asset generated through Maya’s AI-assisted content workflow surfaced on LinkedIn before anyone on her team realized it had been published. The post contained outdated factual claims about a named competitor. This week: the framework she needed before that happened.

The audit

Maya spent the weekend after the LinkedIn thread doing what most VPs of Marketing do after a public failure: auditing backwards. She pulled every AI-assisted workflow her team ran. Twelve of them.

At first glance, the competitive comparison workflow looked like the others. It had a review step and a quality check, which meant that on paper, the process worked. Several members of her team had already pointed this out in the initial conversations. The system did what it was designed to do.

That explanation held up until Maya looked more closely at what the review step was actually supposed to accomplish.

She realized the workflow included a review step, but it lacked a standard for what review meant for that content category. Her team treated it as a quality check when the situation called for a judgment call. The AI workflow never distinguished between those two things.

The question her workflow never asked

Most AI content workflows ask two questions before they publish: is the content accurate, and is it on brand?

For routine content, those are the right questions. For content that carries brand, legal, or competitive risk, they are incomplete.

The question missing from Maya’s workflow was: Does this decision belong to AI, or to a human?

Without that question, every piece of content moved through the same gate regardless of what was at stake if it was wrong. A blog outline and a competitive positioning claim require very different levels of human oversight. Maya’s process treated them identically.

What her team needed was a way to classify those decisions before the workflow began.

Classification has to happen before review. The review step existed, but the classification standard did not. That distinction turned out to be the difference between a functioning process and one that quietly allowed risk through the system.

A framework for drawing the line

Maya’s team had already built a review step into the workflow. What the process lacked was a classification standard that defined which decisions required human judgment before the content entered the review stage.

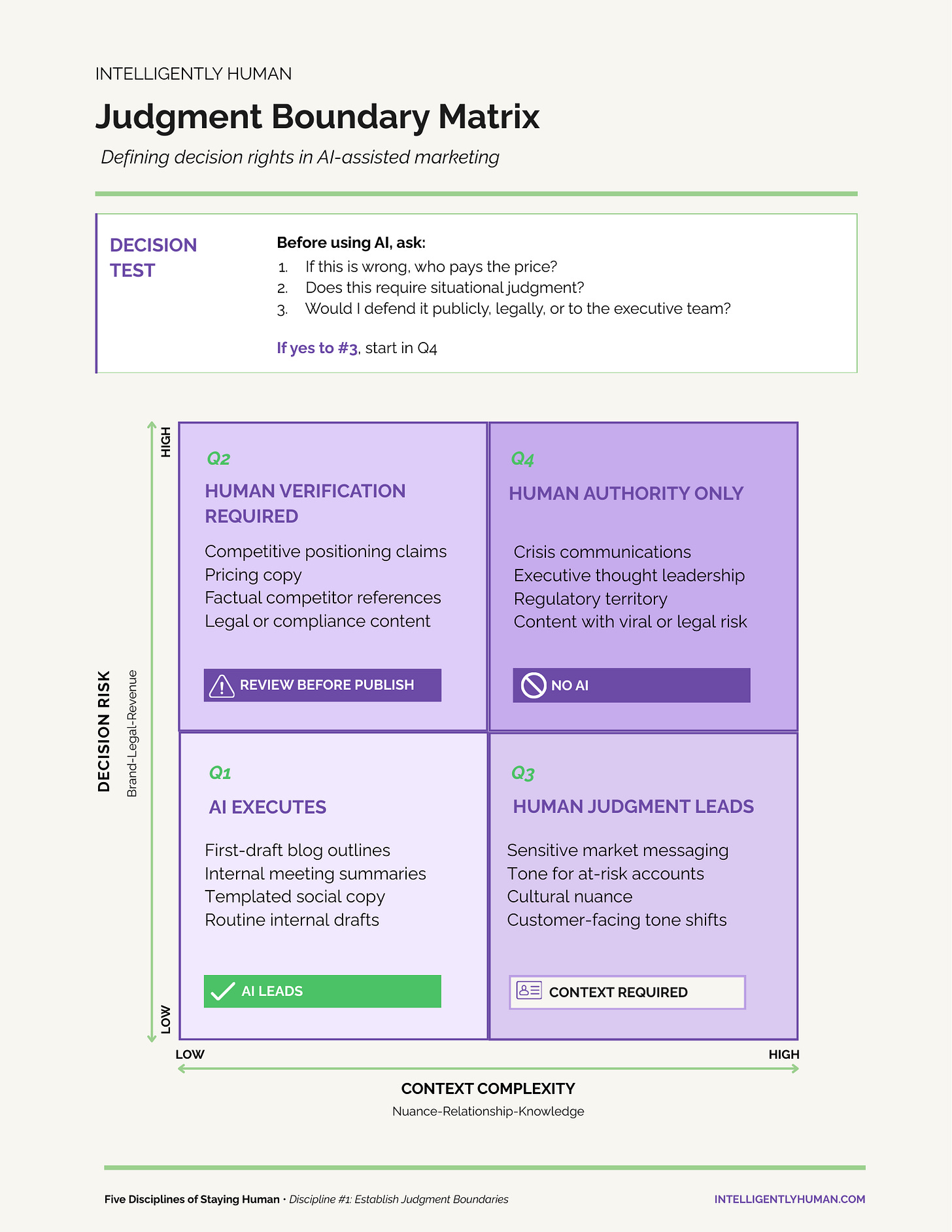

The Judgment Boundary Matrix is designed to solve that problem.

The framework gives marketing teams a repeatable way to classify AI-assisted content decisions before they enter the review process. It maps decisions across two factors: how much is at stake if the content is wrong, and how much contextual judgment the decision requires. The intersection of those two dimensions determines who owns the decision.

Use the matrix to classify the decision before the workflow reaches the review stage.

Each quadrant defines a different level of human authority in the workflow.