They Were Using AI. They Just Weren’t Trusting It.

How a B2B SaaS CMO learned that 87% AI adoption doesn't mean anything if no one trusts the output

The campaign review that changed everything

The senior marketer presenting had clearly done her homework.

Alysa, the CMO, was reviewing an AI-informed recommendation for their annual user conference campaign, a six-figure investment targeting enterprise IT decision-makers. The AI agent had analyzed three years of behavioral data and identified a new account segmentation pattern: companies experiencing rapid headcount growth were 3x more likely to register, regardless of industry vertical.

The analysis looked solid. The data was clean. The logic was sound.

Alysa asked her team, “What do you think? Should we reallocate budget toward this segment?”

The room went quiet.

The senior marketer—someone Alysa had personally recruited, someone who’d run enterprise campaigns for over a decade—said:

“The recommendation makes sense. The data supports it. But I’m not confident enough to defend it to the sales team if it underperforms.”

That admission cracked everything open.

AI was working. But the team couldn’t rely on it when they couldn’t fully explain how it arrived at its recommendations.

What she saw beneath the surface

Alysa is the CMO of a mid-sized B2B SaaS company selling infrastructure software to enterprises. Think 12-18 month sales cycles, $200K+ ACV, buying committees of 6-8 people, and deals that live or die on trust.

By early 2026, her team had AI agents embedded across:

Content operations: Drafting solution briefs, case studies, and technical documentation for different buyer personas

Campaign orchestration: Managing account-based campaigns across multiple stakeholders

Analytics: Analyzing pipeline influence and content engagement across long buying journeys

Customer communications: Personalizing onboarding sequences, expansion plays, and renewal campaigns

The technical work was done. AI agents were activated. Training was complete. Her dashboard said 87% adoption.

But in that campaign review, she finally saw what 87% adoption actually meant.

In our coaching session after the meeting, Alysa asked me: “How do I know if they’re actually using the agents’ outputs, or just using the tools to meet our adoption goals?”

“Walk me through what you’re seeing,” I said.

“People are activating the tools. Training completion is high. Content volume is up. But when I dig into how decisions are actually being made, I get evasive answers. ‘The tools are helpful.’ ‘We’re learning.’ ‘The agents give us good options.’ Then they change the subject.”

Me: “What does that tell you?”

Alysa: “That they’re complying. Not trusting.”

She was right.

What 87% adoption actually meant

Over the next few weeks, I talked to Alysa’s leadership team, which included directors, senior managers, content leads, and campaign managers. What I heard wasn’t resistance to AI. It was something more concerning.

They were using the agents—then redoing the work manually.

A content director told me:

“I run the agent to generate a technical whitepaper for CISOs. Then I rewrite 80% of it after hours because I can’t tell if it’s positioning our differentiation correctly. What if a prospect shares it with their evaluation team and it sounds generic?”

A campaign manager:

“The agent recommends shifting the budget from our CFO nurture campaigns to our CIO campaigns based on engagement patterns. The logic makes sense. But I don’t know how to defend that to the sales team if the pipeline from CFOs drops. So I just...don’t do it.”

A senior marketer:

“I activated the agent. I generate content with it. I just never ship anything without completely reworking it first. The agent is fast, but I’m the one who has to answer to the VP of Sales when a campaign doesn’t generate qualified pipeline.”

A customer marketing lead:

“We’re using agents to personalize renewal campaigns. But every email gets manually reviewed by me and our CS leader because one wrong message to a $500K customer could kill the renewal. The agent saves time on drafts. It doesn’t save me from accountability.”

This is what 87% adoption looked like beneath the surface: people performing AI adoption for the dashboard while doing the actual work the old way, or doing the work twice.

The team was adopting AI, but with zero confidence. They were terrified to rely on it.

The three questions no one could answer

Alysa’s team understood AI. The problem was that they were being asked to make decisions they couldn’t defend.

In B2B SaaS marketing, you’re accountable to Sales when the campaign pipeline is weak, to Product when positioning doesn’t resonate in competitive deals, to the executive team when a repositioning effort doesn’t move win rates, and to Customer Success when expansion messaging falls flat.

Your judgment is constantly scrutinized. Every campaign decision gets interrogated in pipeline reviews. Every positioning choice gets stress-tested in deal debriefs. And the teams you support start believing they can do marketing better than you—the seasoned marketing expert.

Now add AI agents into that equation, and suddenly you’re approving decisions shaped by systems you don’t fully control—decisions you’ll need to defend in rooms where “the AI said so” isn’t an acceptable answer.

Alysa’s directors kept circling back to three anxiety-inducing questions:

“Do I trust this recommendation, or should I pressure-test it?”

An agent analyzes three years of pipeline data and recommends deprioritizing the healthcare vertical in favor of financial services companies with recent M&A activity. The pattern makes sense statistically. But your sales leader has relationships in healthcare. Your last three case studies are healthcare logos. Do you:

Trust the data and reallocate budget (and own the outcome if healthcare deals dry up)?

Second-guess the recommendation and keep the current strategy (and become the AI bottleneck)?

Spend three days validating the agent’s work (and slow everything down)?

“Is this something I approve, or do I escalate?”

An agent suggests updating your homepage messaging from “enterprise-grade infrastructure” to “infrastructure that scales with enterprise growth” based on A/B test performance and win/loss interview analysis. It’s a small change. But repositioning is strategic. The line between “you have autonomy on this” and “you should have flagged this” is invisible. So every decision feels high-stakes.

“If this underperforms, is that on me or the model?”

An agent-generated ABM campaign targets accounts based on behavioral signals (product usage patterns from trial users) rather than traditional firmographics (company size, industry). It performs 30% better on early engagement metrics. But three months later, only 20% of those accounts convert to sales conversations, and Sales is frustrated because the accounts “don’t fit our ICP.”

Who’s accountable? The marketer who approved the targeting? The person who didn’t catch that the agent was optimizing for engagement, not conversion? The data science team that trained the model?

When those questions don’t have clear answers, hesitation becomes the rational choice.

What Alysa had to change

Alysa had done what strong leaders do during transformation:

Set a clear direction

Communicated urgency

Projected confidence

But her confidence in the AI strategy was being interpreted as finality, not support.

One of her directors later told me: “It felt like her expectation was acceptance, not open discussion about when to trust (and when not to trust) AI outputs. I didn’t want to be the one slowing things down.”

Alysa realized she’d optimized for speed. What her team actually needed was clarity about what happens when things go off the rails.

She’d confidently communicated the strategy. But they needed to know she had their backs.

The shift:

From: “Here’s the AI roadmap—now execute it”

To: “Here’s how we make judgment under uncertainty safe”

Practice 1: She drew the lines

Alysa’s first move was to stop saying “use your judgment” and start defining where judgment lived.

She spent a week mapping every major marketing workflow and putting each decision into one of three categories:

Agent-led, human-approved:

Draft content (whitepapers, solution briefs, technical docs, case study first drafts)

A/B test recommendations (subject lines, CTA copy, email send times)

Performance reporting (campaign dashboards, pipeline attribution, content engagement)

SEO optimization (keyword recommendations, meta descriptions, blog outlines)

Agent-generated, human-validated:

Segmentation hypotheses (new ICP patterns, propensity models, account scoring)

Budget reallocation suggestions (channel mix, campaign investment, spend optimization)

Campaign optimization plays (messaging variants, audience targeting adjustments, bid strategies)

Content repurposing recommendations (turn webinar into blog series, extract social snippets)

Human-owned:

Positioning and messaging strategy (differentiation claims, value propositions, category positioning)

Customer promises and product claims (ROI statements, capability assertions, competitive comparisons)

Brand narrative decisions (company story, executive thought leadership, crisis communications)

Strategic partnership messaging (co-marketing campaigns, joint value propositions)

High-value account personalization (enterprise deal-specific campaigns, executive outreach)

She published it as a one-page guide: “When AI Leads, When Humans Decide.”
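A guide like this can also be written down as a simple lookup, which makes gaps easy to spot. The sketch below is illustrative only: the three category names come from the list above, but the `DecisionRight` enum, the `DECISION_MAP` keys, and the `who_decides` helper are hypothetical names, and treating unmapped decisions as human-owned is an assumption rather than something Alysa’s guide specifies.

```python
from enum import Enum


class DecisionRight(Enum):
    AGENT_LED_HUMAN_APPROVED = "Agent-led, human-approved"
    AGENT_GENERATED_HUMAN_VALIDATED = "Agent-generated, human-validated"
    HUMAN_OWNED = "Human-owned"


# Hypothetical keys: a few entries per category, taken from the lists above.
DECISION_MAP = {
    "draft content": DecisionRight.AGENT_LED_HUMAN_APPROVED,
    "a/b test recommendations": DecisionRight.AGENT_LED_HUMAN_APPROVED,
    "performance reporting": DecisionRight.AGENT_LED_HUMAN_APPROVED,
    "segmentation hypotheses": DecisionRight.AGENT_GENERATED_HUMAN_VALIDATED,
    "budget reallocation suggestions": DecisionRight.AGENT_GENERATED_HUMAN_VALIDATED,
    "positioning and messaging strategy": DecisionRight.HUMAN_OWNED,
    "customer promises and product claims": DecisionRight.HUMAN_OWNED,
}


def who_decides(decision: str) -> DecisionRight:
    """Return the category for a decision type.

    Assumption: anything not on the map is treated as human-owned.
    """
    return DECISION_MAP.get(decision.strip().lower(), DecisionRight.HUMAN_OWNED)


if __name__ == "__main__":
    for name in ("Draft content", "Budget reallocation suggestions", "New pricing page"):
        print(f"{name}: {who_decides(name).value}")
```

Defaulting to the most conservative category is one possible design choice; a team could just as reasonably have unmapped decisions raise an error so the map gets updated.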

What she expected: Pushback. “This will slow us down. This is too restrictive.”

The reality: Relief.

“I’ve been guessing for three months where I had discretion and where I didn’t. I was spending 10 hours a week revalidating agent recommendations because I didn’t want to make the wrong call. Thank you for just telling me.”

- Campaign Manager

Her content director: “Now I know the agent owns first drafts and research, and I own differentiation and voice for enterprise buyers. That’s clear. I can move faster now.”

Once Alysa’s team knew exactly which calls were theirs and where they could rely on the agents, decisions sped up. They weren’t carrying the weight of every decision alone.

Practice 2: She named what everyone feared

Early on, Alysa’s messaging was all about momentum: “AI is transforming how we work. We’re moving fast. This is the future.”

What she wasn’t saying: some of them were scared.

In her next leadership meeting, she changed her approach.

“We’re introducing systems that will sometimes be wrong. What matters isn’t perfection, it’s how we surface problems and learn from them.”

Then she made three commitments:

1. Raising concerns won’t be seen as resistance.

If you think an agent recommendation is off, say so. That’s judgment, not obstruction.

2. Mistakes surfaced early will be treated as learning.

We’re not penalizing people for catching AI errors. We’re rewarding it.

3. Silence is riskier than slowing down.

If you’re not sure, ask. If something feels wrong, flag it. Don’t wait.

The team’s attitude shifted.

One director who’d been quiet for weeks asked: “What happens if I override an agent’s recommendation and I’m wrong?”

Alysa: “Then we figure out why your judgment differed and whether we need to better understand how the agent came to that recommendation. You’re not wrong for exercising judgment. That’s your job.”

Naming the fear made it manageable.

Practice 3: She told them what AI can’t do

As agents took on more execution, Alysa noticed something troubling: her team was starting to defer judgment, not just tasks.

In a messaging review, someone presented an agent-generated value proposition for their new product module. When Alysa asked about the positioning choice, the marketer said: “The agent recommended this framing based on competitive analysis and win/loss data, so I went with it.”

Not: “The agent suggested this, and here’s why I think it resonates with enterprise infrastructure buyers.”

Alysa realized she’d accidentally sent a signal that AI outputs were answers instead of inputs. She course-corrected fast.

In her next all-hands, she said:

“AI can draft a technical whitepaper in two hours instead of two weeks. It can analyze three years of pipeline data across 50 variables to find patterns we’d miss. It can generate a hundred messaging variations and predict which will perform better based on historical engagement.

But it can’t understand the anxiety a CFO feels when evaluating a $300K infrastructure decision during a budget freeze. It can’t make the call about whether our messaging is technically accurate but misses the emotional reality of our buyers’ risk aversion. It can’t tell when we’re optimizing for this quarter’s pipeline metrics at the expense of long-term brand positioning that wins seven-figure enterprise deals.

It can’t sit in a sales call and hear the hesitation in a CIO’s voice when they ask about our roadmap. It can’t read the room when a champion goes quiet because their internal initiative lost executive sponsorship.

That’s your expertise. And it’s more valuable now, not less.”

Then she changed how her team reviewed work.

Old way: “Here are the results from the agent.”

New way: “Here’s what the agent recommended, here’s my reasoning for accepting or adjusting it based on what I know about enterprise buying behavior, and here’s the outcome.”

She made judgment visible again, and she required it in campaign reviews, content approvals, and strategy presentations.

Her team stopped seeing AI as a replacement and started seeing it as a tool that amplified the judgment they were hired for.

Her senior demand gen lead told her later: “I thought AI meant my expertise mattered less. You helped me see it means my expertise matters more, because now I can focus it on the decisions that actually drive enterprise pipeline, not on generating the 47th draft of a nurture email.”

Practice 4: She made trust a system, not a vibe

Alysa realized early on that “trust me” wasn’t a scalable strategy.

Her team didn’t doubt her intentions. They doubted the system would protect them when something went wrong. So she stopped talking about trust and started building it.

She created a one-page AI policy that answered:

What can agents do without approval?

What requires human signoff?

What data is off-limits for prompting?

Who’s accountable when outputs fail?

She assigned ownership:

Every AI agent got assigned a human “owner”—the person responsible for its outputs.

Example:

Content Generation Agent

Owner: Senior Content Manager

Can: Draft blogs, social posts, email copy

Cannot: Publish without review, make product claims, access customer data

Escalate to: Content Director (brand questions), Legal (compliance questions)
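An ownership record like the one above maps naturally onto a small data structure. This is a minimal sketch, not the format Alysa’s team used: the `AgentPolicy` class, its field names, and the `check_action` helper are hypothetical, and routing any unlisted action to escalation is an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    name: str
    owner: str
    can: set = field(default_factory=set)
    cannot: set = field(default_factory=set)
    escalate_to: dict = field(default_factory=dict)  # question type -> role


def check_action(policy: AgentPolicy, action: str) -> str:
    """Classify an action as 'blocked', 'allowed', or 'escalate'.

    Assumption: actions on neither list go to a human via escalation.
    """
    key = action.strip().lower()
    if key in policy.cannot:
        return "blocked"
    if key in policy.can:
        return "allowed"
    return "escalate"


# Values taken from the example record above.
CONTENT_AGENT = AgentPolicy(
    name="Content Generation Agent",
    owner="Senior Content Manager",
    can={"draft blogs", "draft social posts", "draft email copy"},
    cannot={"publish without review", "make product claims", "access customer data"},
    escalate_to={"brand": "Content Director", "compliance": "Legal"},
)

if __name__ == "__main__":
    for action in ("Draft blogs", "Make product claims", "Rewrite homepage"):
        print(f"{action}: {check_action(CONTENT_AGENT, action)}")
```

Checking the "cannot" list first means a prohibition always wins if an action ever ends up on both lists.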

She built escalation paths:

“If the agent is confident but you’re not, escalate to [specific person]. You won’t be penalized for raising concerns. You’ll be rewarded for catching issues early.”

The shift was immediate.

People stopped wondering if they could question an AI recommendation. They knew exactly how to escalate and who would back them up.

Trust became operational. And once it was operational, it scaled.

Practice 5: She learned when to say no

The board loved Alysa’s AI momentum. They wanted more.

“Can we automate approval workflows for low-stakes content?”

Alysa said no.

“We’re keeping humans in the approval loop, even for routine work. The moment we remove human judgment entirely, we’ve told the team their expertise doesn’t matter. In B2B, where trust and nuance drive buying decisions, that’s not a trade I’m willing to make.”

She also paused several agent use cases that looked innovative but didn’t demonstrate clear value:

An agent that “optimized” email subject lines but actually just made them more generic

A campaign orchestration feature that added complexity without improving outcomes

A content repurposing tool that saved time but stripped strategic context

The impact:

Her team noticed. They started trusting the remaining initiatives more, because Alysa had demonstrated discernment, not just enthusiasm.

One director told me: “When she said no to that automation, I realized she actually understands what we do. She’s not just chasing AI for the sake of AI.”

Strategic restraint became a signal of mature leadership.

What changed

Within 90 days, Alysa’s team looked different.

Trust indicators increased:

“Is this allowed?” questions dropped by 65%

Directors brought her judgment calls, not permission requests

Team members started voluntarily sharing “what I learned from the agent today” in weekly standups

Override rates stabilized at 15-20%, reflecting healthy skepticism, not fear

Escalations increased 40% (in a good way). People felt safe raising concerns early

Performance outcomes improved:

Content output increased 40% without quality degradation

Campaign cycle time from concept to launch fell by 30%

Enterprise deal content (case studies, ROI calculators, technical documentation) shipped 2x faster

Pipeline influence attribution became 50% more accurate (agents analyzed multi-touch engagement across long sales cycles)

Engagement metrics improved across all buyer personas (personalization was finally working)

Compliance incidents with AI-generated content: zero

Team dynamics shifted:

The directors who’d been quiet in meetings started pushing back again with strategic challenges

Manager effectiveness scores increased 25%

Retention stabilized—two key people who’d been exploring external opportunities stayed

Cross-functional tension with Sales decreased (clearer communication about why certain accounts were targeted in campaigns)

“I thought I had to choose between speed and trust. I was wrong. Once I built trust, speed followed naturally. My team wasn’t slowing me down with questions—they were paralyzed by ambiguity. The boundaries freed them to move faster than they ever had, because they knew exactly where they owned decisions and where they could rely on agents.”

— Alysa, CMO

Why B2B SaaS Makes This Harder

Here’s what Alysa learned that most CMOs don’t talk about:

In B2B SaaS, the cost of broken trust is catastrophic.

When you’re selling enterprise infrastructure software with 12-18 month sales cycles and six-figure deals, marketing isn’t just generating leads. It’s building credibility across an entire buying committee.

One generic-sounding whitepaper that doesn’t speak to a CISO’s actual concerns can kill a deal three months into the evaluation.

One poorly personalized email to a champion can make you look like you don’t understand their business.

One messaging shift that sales can’t explain to prospects creates friction that slows the entire pipeline.

Your buyers are few. Your sales cycles are long. Your brand is your primary asset.

Every piece of content, every campaign, every message carries weight. There’s no “move fast and break things” in enterprise SaaS marketing. There’s “get it right because you only get a few at-bats with each account.”

Leadership hesitation in B2B SaaS isn’t bureaucracy. It’s risk management in an environment where trust takes months to build and seconds to break.

And when that hesitation isn’t addressed explicitly with clear boundaries and operational trust systems, it spreads across the team and stalls everything.

“I kept thinking my team was being too cautious. But they weren’t. They were being appropriately careful in a context where mistakes are expensive and visible. My job wasn’t to make them less careful—it was to give them the systems that made it safe to move fast.”

— Alysa, CMO

What This Means for You

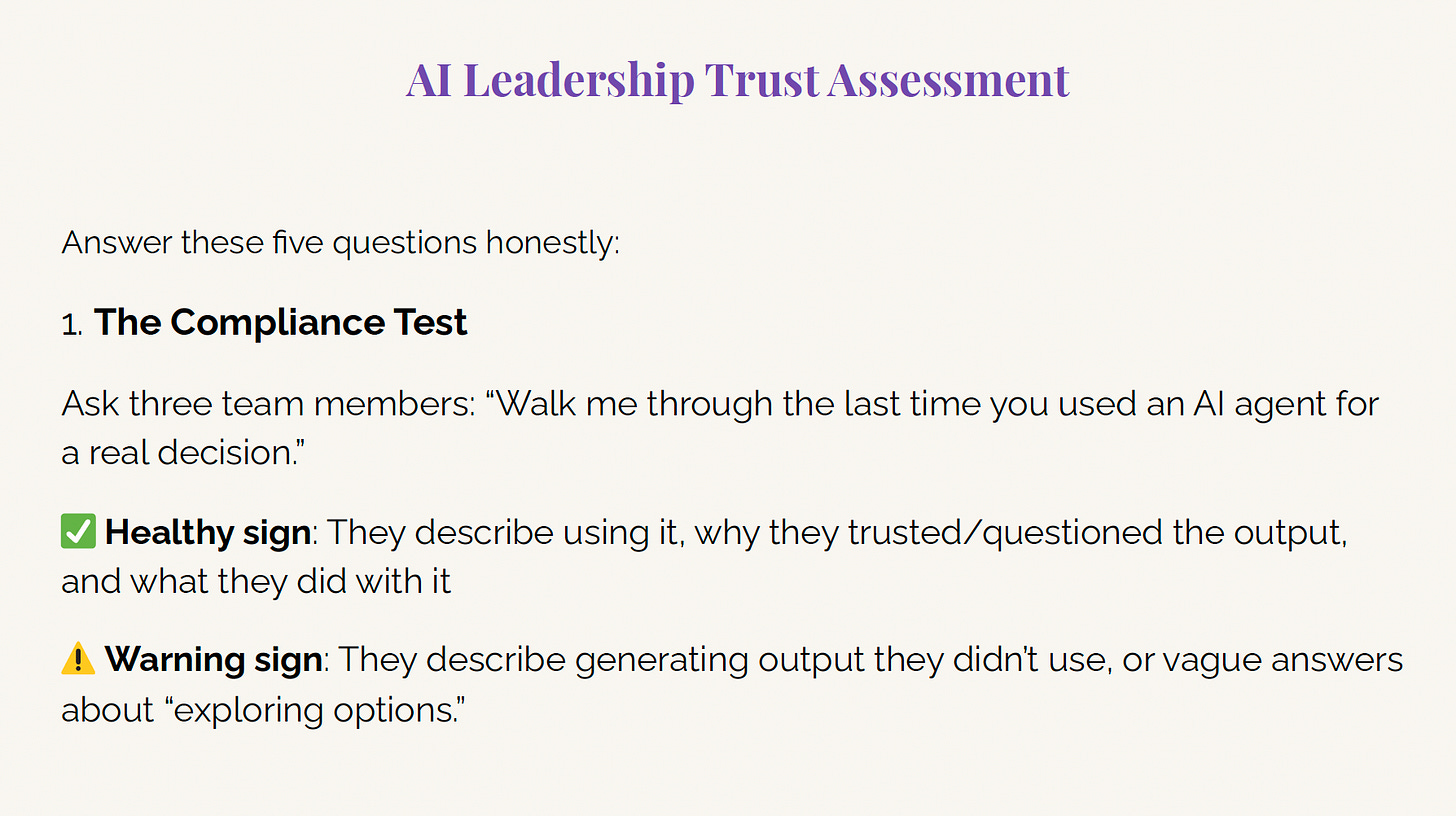

If you’re leading AI transformation in B2B SaaS marketing, these are the questions that will tell you whether you’re building for adoption metrics or building for trust:

Are you measuring adoption or confidence?

High activation rates might just mean people are afraid to say no. Look for behavioral signals: Are people acting on agent recommendations, or generating outputs they never use? Are decisions speeding up, or slowing down despite “high adoption”?

Can your team explain your AI rules in 60 seconds?

If not, they’re guessing—and protecting themselves through shadow work. Ask three people on your team right now: “What requires human approval vs. what can an agent handle autonomously?” If you get three different answers, you have an ambiguity problem. Ambiguity equals risk.

Do your best people feel safe to override AI recommendations?

If not, you’ve accidentally told them their judgment doesn’t matter. Watch who’s going quiet in meetings. Those are often your strongest performers realizing their expertise feels devalued.

What happens when AI fails, and who’s accountable?

If that’s not explicit, your team is carrying that uncertainty alone. Every agent recommendation becomes high-stakes because no one knows what happens if they approve something that fails.

Have you named what AI can’t replace?

If not, your team is wondering if they still matter. Be specific. Don’t say “your creativity is important.” Say “AI can’t read a CIO’s hesitation in a sales call. You can. That’s why you’re here.”
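For the first question above (adoption versus confidence), the behavioral signals can be tallied from a simple log of agent outputs. The sketch below is a rough illustration under stated assumptions: the `OutputRecord` fields, the `confidence_signals` function, and the 0.5 heavy-rework threshold are all hypothetical, and the sample records are made up for the example rather than drawn from Alysa’s team.

```python
from dataclasses import dataclass


@dataclass
class OutputRecord:
    author: str
    used: bool              # did the output (or a decision based on it) ship?
    rework_fraction: float  # share rewritten by hand before shipping, 0.0 to 1.0


def confidence_signals(records, heavy_rework=0.5):
    """Split agent outputs into unused, heavily reworked, and acted on.

    Assumption: rework at or above `heavy_rework` counts as redoing the work.
    """
    total = len(records)
    if total == 0:
        return {"unused_rate": 0.0, "heavy_rework_rate": 0.0, "acted_on_rate": 0.0}
    unused = sum(1 for r in records if not r.used)
    heavy = sum(1 for r in records if r.used and r.rework_fraction >= heavy_rework)
    acted = total - unused - heavy
    return {
        "unused_rate": unused / total,
        "heavy_rework_rate": heavy / total,
        "acted_on_rate": acted / total,
    }


# Made-up sample log for illustration only.
SAMPLE = [
    OutputRecord("content director", used=True, rework_fraction=0.8),
    OutputRecord("campaign manager", used=False, rework_fraction=0.0),
    OutputRecord("demand gen lead", used=True, rework_fraction=0.1),
    OutputRecord("customer marketing lead", used=True, rework_fraction=0.2),
]

if __name__ == "__main__":
    print(confidence_signals(SAMPLE))
```

A dashboard that only counts activations would score every record in this sample as adoption; the split is what shows how much of the output is actually relied on.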

Alysa’s transformation started when she stopped optimizing for dashboard metrics and started designing for human trust in a high-stakes B2B environment.

Is Your Team Trusting AI? The 5-Question Diagnostic

To design AI leadership for human trust, you first need to know where your team stands. Download this assessment to find out, and to learn how to get yourself and your team on a healthy path.

The Real Bottom Line

In 2026, AI agents will become table stakes in B2B SaaS marketing. The tools will work. The technology will be proven. The integrations will be solid. The question won’t be whether to adopt AI.

The question will be whether your team trusts you enough to rely on AI when the stakes are enterprise deals, long sales cycles, and brand credibility.

Alysa learned this the hard way: You can’t outsource trust to a dashboard, you can’t automate psychological safety, and you can’t generate confidence with a training deck.

AI adoption stalls when leadership hasn’t made judgment under uncertainty safe.

The five practices Alysa applied—establishing boundaries, leading with honesty, protecting human strengths, operationalizing trust, and exercising restraint—aren’t about AI strategy.

They’re about human-centric leadership design in an environment where:

A single generic whitepaper can kill a six-figure enterprise deal

Sales needs to defend every campaign decision in pipeline reviews

Your brand is your primary competitive asset

Buyers evaluate you across committees of 6-8 people over 12-18 months

In B2B SaaS, leadership design isn’t a nice-to-have. It’s the difference between AI that accelerates your business and AI that creates a compliance charade that exhausts your team.

The technology is ready.

The question is: Are you?

A Note on This Case

This case study is a composite drawn from multiple B2B marketing leaders I’ve coached through AI and technology transformation. Details have been modified to protect confidentiality, but the patterns, tensions, and breakthroughs are real.

The five practices referenced throughout come from The Discipline of Staying Human: AI Leadership Framework for 2026, which you can download here.

Next month, we’ll deep-dive into Practice 4: Operationalize Trust—including diagnostic tools, templates for building trust systems, and a complete playbook you can adapt for your organization.

For now, chime in: Which practice resonates most with where you are right now?

Sources Referenced

The patterns described in this case align with emerging research on AI adoption challenges:

McKinsey, The State of AI (2025): AI adoption often plateaus at decision rights, not deployment

Harvard Business Review, Executives Overestimate AI Readiness (Nov 2025): Executives consistently overestimate employee enthusiasm and underestimate trust gaps

UK Parliament POST, Artificial Intelligence and Employment (Dec 2025): Psychological safety is critical to surfacing errors in automated systems

KPMG, AI Quarterly Pulse (2025): Trust increases when accountability and recourse are explicit

Deloitte, Tech Trends: Agentic AI (2025): Strategic restraint is emerging as a maturity signal in enterprise AI